Ever wonder why ChatGPT speaks English better than, say, Swahili or Arabic?

It’s not an accident, or some special favoritism toward English in the training data; it’s math. AI companies have been flying blind when building models for non-English languages, guessing at how much data to use and which languages to train together.

Google’s research team just published ATLAS (paper), the largest public study on multilingual AI training. They ran 774 experiments across 400+ languages to answer questions that have stumped developers: How much bigger should your model be if you want to support 50 languages instead of 10? Which languages actually help each other during training?

The key breakthrough

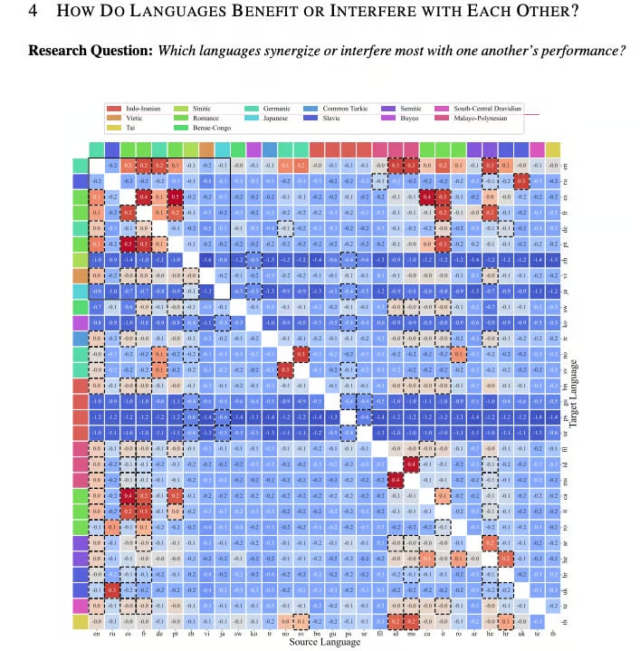

ATLAS creates a “transfer matrix” showing which languages boost each other’s performance. Norwegian improves when you train it alongside Swedish and German. Malay benefits from Indonesian. Arabic gets better with Hebrew. The pattern? Languages that share the same alphabet and language family help each other most.

Three practical tools ATLAS provides:

- Scaling calculator: If you want to double your language support (from K to 2K languages), increase model size by 1.18x and total data by 1.66x.

- Language pairing guide: A heat map showing which languages work best together; English, French, and Spanish help the most languages overall.

- Pre-train vs. fine-tune decision: A formula showing when to start from scratch versus building on an existing multilingual model (usually between 144-283 billion tokens for 2 billion parameter models).
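
To make the scaling calculator concrete, here is a minimal Python sketch. It uses only the two per-doubling multipliers quoted above (1.18x model size, 1.66x total data). The function name `scale_for_languages` is hypothetical, and the code assumes those multipliers compound smoothly as a power law when the language count grows by something other than exactly 2x. That is an extrapolation for illustration, not the formula from the ATLAS paper.

```python
import math

# Multipliers quoted in the article for doubling language count (K -> 2K).
MODEL_SIZE_PER_DOUBLING = 1.18
DATA_PER_DOUBLING = 1.66


def scale_for_languages(current_langs: int, target_langs: int) -> tuple[float, float]:
    """Return (model_size_multiplier, data_multiplier) for a change in language count.

    Assumption (not from the paper): the per-doubling multipliers compound
    as a power law, so a growth factor r costs multiplier ** log2(r).
    """
    if current_langs <= 0 or target_langs <= 0:
        raise ValueError("language counts must be positive")
    doublings = math.log2(target_langs / current_langs)
    return (
        MODEL_SIZE_PER_DOUBLING ** doublings,
        DATA_PER_DOUBLING ** doublings,
    )


if __name__ == "__main__":
    # The article's own question: going from 10 to 50 languages.
    model_x, data_x = scale_for_languages(10, 50)
    print(f"model size x{model_x:.2f}, total data x{data_x:.2f}")
```

Under that power-law assumption, going from 10 to 50 languages works out to roughly 1.47x the model size and about 3.24x the total data. Treat these as ballpark figures only; the paper’s fitted scaling law is the authoritative source.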

They also tackled the “curse of multilinguality”, or the fact that adding more languages typically hurts performance. Good news: the curse is real, but mild. Languages sharing scripts create enough positive synergy to offset most capacity constraints.

Why this matters

Over 50% of AI users speak non-English languages, but scaling laws have been overwhelmingly English-focused. Developers building multilingual AI have been making expensive guesses about model size and training data.

ATLAS gives them a data-driven playbook. Expect the next wave of multilingual models to actually work well in languages beyond English, because companies now know exactly how to allocate compute budget across languages efficiently.

What’s next: Model builders at companies like Anthropic, OpenAI, and Google will likely adopt these scaling principles over the next 6-12 months (maybe the Chinese labs will as well!). If you’re building or evaluating multilingual AI products, check which languages they prioritized in training; ATLAS shows these choices have measurable impact.

Editor’s note: This content originally ran in the newsletter of our sister publication, The Neuron. To read more from The Neuron, sign up for its newsletter here.

The post Major Breakthrough: Google Cracked the Code for Building AI in 400+ Languages appeared first on eWEEK.